The state of the art in AI-native research, officially launched today.

For the last 12 months we have been building the most accurate search infrastructure for AI agents on the market. Today we are officially launching our DeepResearch API, the research engine built on top of it.

DeepResearch is a single API that runs multi-step research across the open web and specialised and proprietary sources, then synthesises the results into reports, structured data, and the file formats that enterprise knowledge work requires - PDF, DOCX, XLSX, PPTX. Every claim is cited. Every chart is generated from primary-source data. Every output is reproducible. It is highly customisable and fits into any AI workflow, from AI startups to enterprise.

DeepResearch is in production today, fulfilling hundreds of thousands of research jobs a month for private equity, investment banks, biotechnology companies, consulting firms, government departments, and frontier AI laboratories and startups.

The thesis

We built DeepResearch for knowledge work. The work that finance, life sciences, law, consulting, government, and corporate R&D teams do every day. The work where the answer has to be right because someone is making a multi-million-dollar decision, filing a regulatory document, or publishing a clinical review off the back of it.

General-purpose search APIs were not built for this. Authoritative primary sources (a 10-K, a trial registry, an arXiv preprint, a patent) surface as links among many, with no structural access to the sections, endpoints, or claims an agent actually needs. When an agent reaches for primary data through them, retrieval collapses. The answer looks right and is wrong. Knowledge work cannot run on this.

So we built retrieval infrastructure specifically for the data classes knowledge work depends on: financial filings, biomedical literature, economic indicators, patents, legal records, real-time markets, academic publishing. The result is structurally higher retrieval quality on the queries knowledge workers actually run than anything achievable through traditional web indexing alone.

Inputs are half the problem. The other half is the shape of the output. Knowledge work doesn't ship in walls of text; it ships in artefacts that other people review, sign off, file, present, and model against. And it isn't always one-shot: the work needs human judgment at key points, not just a finished output dropped on the table. So DeepResearch is configurable end-to-end: human-in-the-loop checkpoints, sandboxed code execution against retrieved data, source control, internal-document uploads. Every parameter, output, and trigger is configurable per task.

DeepResearch is the research engine built directly on top of all of this. The benchmark gap below is what that approach buys.

State of the art across every domain we benchmark

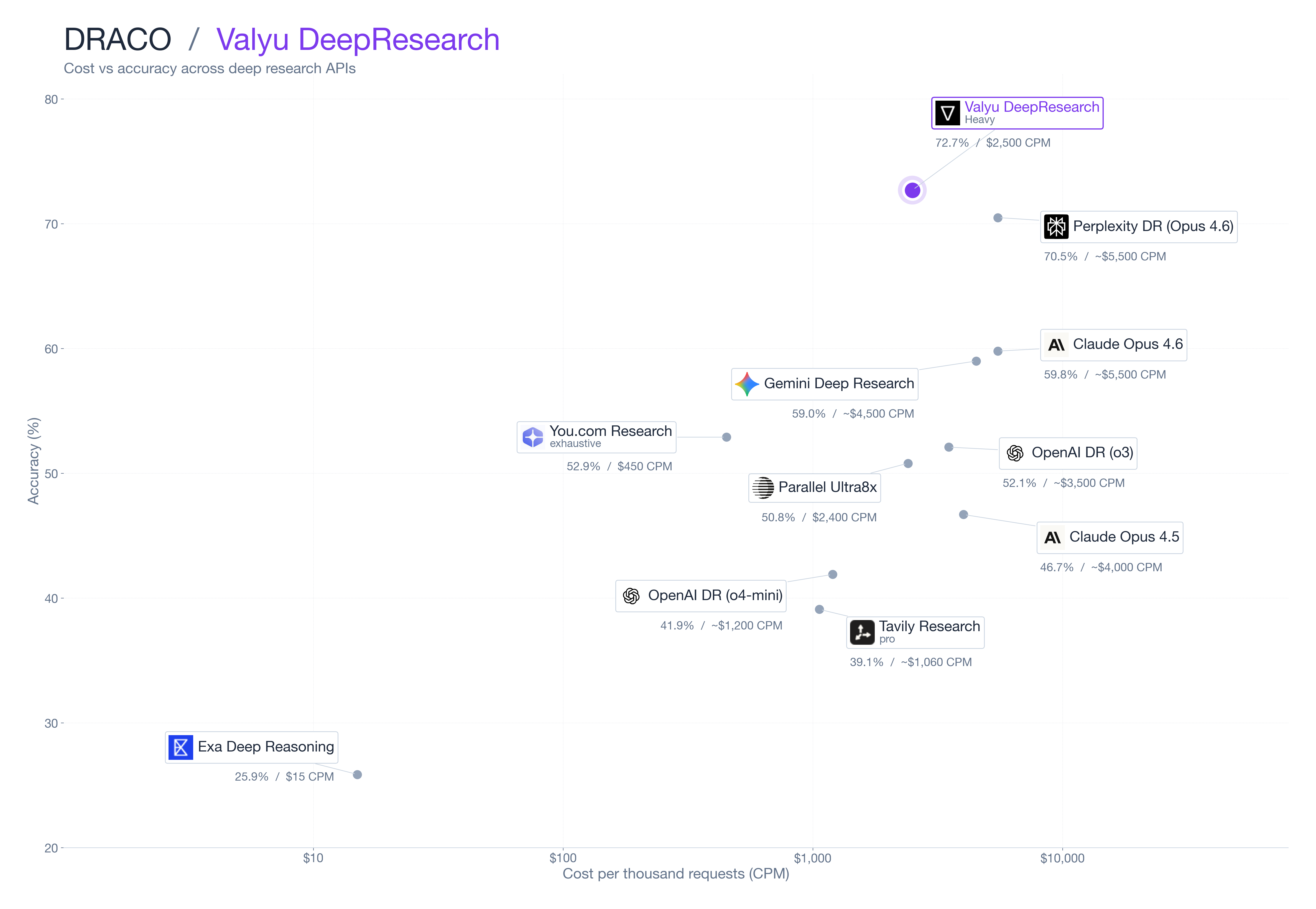

We ran DeepResearch against every other commercially available deep research API on the canonical long-form research benchmarks. Full methodology, per-task scores, judge prompts, and rerun harness: DRACO paper.

DRACO - Long-form research synthesis

DRACO is Perplexity's open expert-rubric benchmark of 100 long-form deep research tasks across 10 professional knowledge-work domains, including finance, medicine, academic research, law, and technology. Each output is graded by a per-criterion judge against a domain-expert rubric. We ran every commercially available deep research API end-to-end against the same 100 questions.

Every search and research API was tested on its highest publicly available compute tier: Parallel (Ultra8x), You.com Research (exhaustive), Tavily (pro), Exa (deep-reasoning), and Perplexity Deep Research (Opus 4.6). Valyu was run on Heavy mode; we offer a higher Max tier but did not use it here, since Max is more expensive per task than any other system in the field.

Most published benchmarks ship without the underlying scripts that produced them, so-called "trust me bro" benchmarks. We publish ours in full. Datasets, run scripts, raw outputs, and judge evaluations are open and independently verifiable at github.com/valyuAI/valyu-benchmarks.

| System | DRACO | Cost (CPM) |
|---|---|---|
| Valyu DeepResearch (Heavy) | 72.7% | $2,500 |
| Perplexity DR (Opus 4.6) | 70.5% | ~$5,500 |
| Claude Opus 4.6 (web+code) | 59.8% | ~$5,500 |
| Gemini Deep Research | 59.0% | ~$4,500 |
| You.com Research (exhaustive) | 53.0% | $450 |
| OpenAI DR (o3) | 52.1% | ~$3,500 |
| Parallel Ultra8x | 51.2% | $2,400 |
| Claude Opus 4.5 (web+code) | 46.7% | ~$4,000 |
| OpenAI DR (o4-mini) | 41.9% | ~$1,200 |
| Tavily Research (pro) | 38.8% | ~$1,060 |
| Exa Deep Reasoning | 25.9% | $15 |

Valyu DeepResearch leads the field at less than half the cost of the next-best system.

Academic literature synthesis

On ScholarQA-CS, the leading academic literature synthesis benchmark published in Nature, Valyu DeepResearch achieves a 29% relative improvement over the previous state of the art (OpenScholar, jointly built by University of Washington, the Allen Institute for AI, Meta, and Carnegie Mellon).

| Benchmark | Valyu DeepResearch |
|---|---|
| ScholarQA-CS (rubric quality) | 74.5% |
| ScholarQA-Multi (Prometheus) | 4.56 / 5 |

Achieved with our lowest-compute tier.

Search layer retrieval (previously published)

DeepResearch is built on top of our search infrastructure. We previously benchmarked that retrieval layer end-to-end against general-purpose search APIs. The numbers below characterise the data layer underneath; they remain the highest published across academic, financial, economic, time-sensitive, and medical retrieval.

| Benchmark | Valyu | Parallel | Exa | |
|---|---|---|---|---|
| FreshQA | 79% | 52% | 24% | 39% |
| SimpleQA | 94% | 93% | 91% | 38% |
| Finance | 73% | 67% | 63% | 55% |
| Economics | 83% | 48% | 30% | 34% |
| MedAgent | 48% | 42% | 44% | 45% |

DeepResearch Features

DeepResearch is highly customisable and built to slot into any workflow. Use it as a standalone API for one-off research, as the retrieval-and-synthesis layer inside a larger agent system, or as the engine behind an internal research tool that connects to your existing data, document stores, and toolchain. Every parameter, output, and trigger is configurable per task.

Workflow integration

- Asynchronous by default. Submit a task, retrieve when complete - fits any orchestration layer.

- Webhooks on completion. HTTPS-only with signed payloads, ready for production event pipelines.

- Email on completion. The completed PDF lands in the recipient's inbox without any frontend work.

- Batch endpoint. Submit hundreds of queries in parallel and collect each as it completes.

- MCP integration. Connect any tool exposed via the Model Context Protocol; the agent can query internal systems, databases, and SaaS tools mid-research.

- File uploads. Pass internal documents (PDFs, spreadsheets, images, prior reports) the agent reads alongside its own retrieval.
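
The webhook bullet above mentions signed payloads without naming the signing scheme. As a minimal sketch of what receiver-side verification could look like, the example below assumes a hex HMAC-SHA256 digest over the raw request body with a shared secret. That scheme is a common convention and an assumption here, not something confirmed for DeepResearch; the function names, secret, and payload shape are hypothetical.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw body (assumed scheme, for illustration only)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_webhook(secret: bytes, body: bytes, received_signature: str) -> bool:
    """Return True only if the received signature matches the raw body."""
    expected = sign_payload(secret, body)
    # compare_digest compares in constant time, which avoids timing side channels.
    return hmac.compare_digest(expected, received_signature)


secret = b"example-shared-secret"  # hypothetical secret
body = b'{"task_id": "task_123", "status": "completed"}'  # hypothetical payload shape
signature = sign_payload(secret, body)

print(verify_webhook(secret, body, signature))         # True
print(verify_webhook(secret, body + b" ", signature))  # False: the body was altered
```

Verifying against the raw bytes, before any JSON parsing, matters because re-serialising the payload can change whitespace or key order and invalidate the digest.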

Customisation

- Custom output schemas. Pass a JSON schema and the synthesis layer is bound to fill it - deterministic structured output for downstream pipelines, no parsing required.

- Strategy and mode parameters. Choose Fast, Standard, Heavy, or Max and tune verification rigor per task.

- Format parameters. Specify report style and audience: executive brief, technical report, slide deck, academic review.

- Source control. Restrict or include specific data sources; combine open web with specialised and proprietary corpora per query.

- Reference previous research. Chain follow-ups against the context of an earlier task.
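
To make the customisation options above concrete, here is a hedged sketch of a task request that combines a mode, a custom output schema, and a source restriction, followed by a simple check that a returned object contains the schema's required keys. Every field name (`query`, `mode`, `output_schema`, `included_sources`) and the source identifier are assumptions for illustration; the API reference defines the real parameters.

```python
import json

# A small JSON schema describing the structured output we want back.
report_schema = {
    "type": "object",
    "required": ["company", "revenue_usd", "sources"],
    "properties": {
        "company": {"type": "string"},
        "revenue_usd": {"type": "number"},
        "sources": {"type": "array", "items": {"type": "string"}},
    },
}

# Hypothetical request body: all field names below are assumed, not documented.
task_request = {
    "query": "Summarise the latest annual revenue reported by Example Corp",
    "mode": "standard",                   # Fast, Standard, Heavy, or Max
    "output_schema": report_schema,       # assumed parameter name
    "included_sources": ["sec-filings"],  # assumed parameter name and value
}


def missing_required(schema: dict, output: dict) -> list[str]:
    """List required top-level keys that are absent from the output."""
    return [key for key in schema.get("required", []) if key not in output]


# A made-up response, used only to exercise the check.
example_output = {"company": "Example Corp", "revenue_usd": 1.0e9}

print(json.dumps(task_request, indent=2))
print(missing_required(report_schema, example_output))  # ['sources']
```

A check like this is deliberately shallow; a full JSON Schema validator would also enforce types and nested structure.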

Agent capabilities

- Human-in-the-loop checkpoints. Pause the agent at four stages of the research pipeline (planning, retrieval, synthesis, deliverables) for review, approval, or course-correction. Run autonomously by default, or wire in human review where it matters.

- Sandboxed code execution. The agent runs Python in a sandbox during research for analyses that require computation: cleaning data, recomputing claims, generating charts from underlying numbers.

- Webpage screenshots. The agent captures and embeds screenshots of source pages mid-research for visual citations and audit trails.

- Citations on every claim. Every output is grounded in primary sources, with inline citations resolvable to the exact passage retrieved. Numerical claims are verified before they enter the deliverable.

- Production-grade deliverables. Markdown, PDF, DOCX, XLSX, and PPTX outputs generated in a sandboxed code-execution environment. Spreadsheet formulas resolve. Charts render from data. Decks open cleanly in PowerPoint, Keynote, and Google Slides without manual cleanup.
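
The human-in-the-loop checkpoints described above can be pictured as a staged pipeline that pauses for approval only where a checkpoint is requested. The sketch below is a toy model of that control flow, not DeepResearch's implementation: the four stage names come from the text, while `run_pipeline`, the reviewer callback, and the placeholder stage outputs are hypothetical.

```python
from typing import Callable, Optional

# Stage names as listed in the checkpoint description.
STAGES = ["planning", "retrieval", "synthesis", "deliverables"]

# A reviewer receives the stage name and its draft output, and returns
# True to continue or False to stop the run.
Reviewer = Callable[[str, str], bool]


def run_pipeline(
    query: str,
    checkpoints: set[str],
    reviewer: Optional[Reviewer] = None,
) -> list[str]:
    """Run the stages in order, pausing for review where requested."""
    completed = []
    for stage in STAGES:
        draft = f"{stage} output for: {query}"  # stand-in for real work
        if stage in checkpoints and reviewer is not None:
            if not reviewer(stage, draft):
                break  # reviewer rejected this stage; later stages never run
        completed.append(stage)
    return completed


def reject_synthesis(stage: str, draft: str) -> bool:
    """Example reviewer that approves everything except the synthesis draft."""
    return stage != "synthesis"


# Autonomous run: no checkpoints, so all four stages complete.
print(run_pipeline("EU battery regulation", checkpoints=set()))
# ['planning', 'retrieval', 'synthesis', 'deliverables']

# Review at planning and synthesis; the reviewer stops the run at synthesis.
print(run_pipeline("EU battery regulation", {"planning", "synthesis"}, reject_synthesis))
# ['planning', 'retrieval']
```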

Full parameter reference and integration patterns: DeepResearch quickstart guide and API reference.

Modes

| Mode | Latency | Cost (CPM) | Use case |
|---|---|---|---|
| Fast | 1-8 minutes | $100 | Focused questions, quick briefs, agent loops |
| Standard | 5-20 minutes | $500 | Research summaries, competitive intelligence, literature reviews |
| Heavy | up to 90 minutes | $2,500 | Due diligence, comprehensive analysis, regulatory and market reports |
| Max | up to 3 hours | $15 (per request) | The deepest research tasks - extensive cross-source synthesis, multi-step verification, large deliverables |

Pricing is fixed per task. Every task returns a detailed usage breakdown.
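
Because pricing is fixed per task, a budget for a mixed workload reduces to simple arithmetic. The sketch below assumes CPM means cost per 1,000 tasks, which is consistent with Max being quoted at $15 per request and described as the most expensive tier, but the post does not define the term. The workload numbers are made up for illustration.

```python
# Prices from the Modes table. CPM is ASSUMED to mean cost per 1,000 tasks.
PRICE_PER_TASK_USD = {
    "fast": 100 / 1000,
    "standard": 500 / 1000,
    "heavy": 2500 / 1000,
    "max": 15.0,  # the table lists Max per request, not as CPM
}


def estimate_cost(task_counts: dict[str, int]) -> float:
    """Total cost in USD for a number of tasks per mode."""
    return sum(PRICE_PER_TASK_USD[mode] * count for mode, count in task_counts.items())


# Hypothetical monthly workload.
workload = {"fast": 2000, "standard": 300, "heavy": 40, "max": 2}

print(f"${estimate_cost(workload):,.2f}")  # $480.00
```

Under the same assumption, a single Heavy task costs $2.50 and a single Fast task costs $0.10.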

Built for two audiences

Enterprise knowledge work. DeepResearch is in production at investment firms, banks, biotech companies, consulting firms, government departments, and corporate R&D organisations - fulfilling hundreds of thousands of research jobs a month. Volume pricing, dedicated capacity, custom data source integration, audit logs, and compliance configurations are available. For complex integrations, our engineering team can work directly with yours to embed DeepResearch into your internal workflows.

Frontier AI builders. DeepResearch is the most accurate research and retrieval layer available to teams building autonomous agents, research copilots, and AI-native applications. The API surface is designed for programmatic use: deterministic schemas, predictable per-task pricing, batch processing, and zero infrastructure to maintain. The data infrastructure underneath (hybrid search across specialised and proprietary indexes) is something a team building from scratch would spend years and tens of millions of dollars to replicate.

Both audiences are buying the same product. The first is using it to compress analyst hours. The second is using it to compress what otherwise would be a multi-year infrastructure program.

Representative use cases

- Investment due diligence (PE / IB / hedge funds). A single query returns an investment memo, comparable companies model, and IC deck, sourced from SEC filings, real-time market data, 13F holdings, earnings transcripts, and primary-source reporting. Use the batch endpoint to run an entire deal pipeline overnight.

- Sell-side and buy-side equity research. Daily morning notes, earnings-day batch coverage across an entire portfolio, screened idea-generation memos with macro context. Outputs land as PDF in the analyst's inbox before market open.

- Biomedical literature review and pipeline tracking. Multi-source synthesis across PubMed, bioRxiv, medRxiv, ClinicalTrials.gov, FDA drug labels, ChEMBL, DrugBank, and OpenTargets, returned as a citation-graded review with structured pipeline tracker (sponsor, indication, mechanism, primary endpoint, phase) and a presentation-ready deck.

- Pharma competitive intelligence. Continuous coverage of competitor pipelines, label expansions, regulatory milestones, and deal activity across 10-K/10-Q, EMA/FDA filings, and conference abstracts. Outputs structured for direct ingestion into internal CI dashboards.

- Corporate R&D and patent landscapes. USPTO and global patent corpus retrieval with assignee, IPC, and chronological analysis, cross-referenced against academic publishing and competitor product launches. Returned as written report, structured tracker, and trend deck for technology-scouting and freedom-to-operate work.

- Regulatory and policy monitoring. Continuous coverage of regulatory changes across UK Parliament, US Congress, FDA, European Commission, SEC enforcement actions, and case law, with affected-asset mapping when an internal portfolio is provided. Webhooks fire on every relevant filing.

- Macroeconomic and policy research. Cross-referenced analysis spanning FRED, BLS, World Bank, IMF, and central bank publications, with quantitative model deliverables.

- Agent-grade retrieval. Frontier AI applications use DeepResearch as the retrieval and synthesis layer behind multi-step agent reasoning, where text-only APIs fail to provide structured, citation-grounded primary-source data.

Code

It's as simple as this to get started:
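
A minimal sketch of the asynchronous flow described under Workflow integration: submit a task, then poll until it completes. The client below is an in-memory stand-in so the example runs offline; it is not the Valyu SDK, and the method names (`create_task`, `get_task`), field names, and status values are all assumptions. The quickstart guide and API reference document the real calls.

```python
import itertools
import time


class FakeDeepResearchClient:
    """In-memory stand-in so the flow runs offline. Not the real SDK."""

    def __init__(self, polls_until_done: int = 3):
        self._polls_until_done = polls_until_done
        self._ids = itertools.count(1)
        self._tasks = {}

    def create_task(self, query: str, mode: str = "fast") -> str:
        """Register a task and return its identifier immediately."""
        task_id = f"task_{next(self._ids)}"
        self._tasks[task_id] = {"query": query, "mode": mode, "polls": 0}
        return task_id

    def get_task(self, task_id: str) -> dict:
        """Report 'running' until the task has been polled enough times."""
        task = self._tasks[task_id]
        task["polls"] += 1
        if task["polls"] < self._polls_until_done:
            return {"status": "running"}
        return {"status": "completed", "report": f"Report for: {task['query']}"}


def wait_for_result(client, task_id: str, interval_s: float = 0.01, max_polls: int = 100) -> dict:
    """Poll until the task completes, or raise after max_polls attempts."""
    for _ in range(max_polls):
        result = client.get_task(task_id)
        if result["status"] == "completed":
            return result
        time.sleep(interval_s)
    raise TimeoutError(f"{task_id} did not complete after {max_polls} polls")


client = FakeDeepResearchClient()
task_id = client.create_task("Compare recent GLP-1 trial readouts", mode="fast")
result = wait_for_result(client, task_id)
print(task_id, result["status"])  # task_1 completed
```

In production, a webhook on completion would usually replace the polling loop; polling is shown here because it needs no inbound endpoint.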

Frequently asked questions

What is DeepResearch?

DeepResearch is an AI-native research API that runs multi-step research across the open web and proprietary sources, including financial filings, biomedical literature, patents, economics, and real-time markets, then synthesises results into cited reports, structured data, and PDF/DOCX/XLSX/PPTX deliverables.

How is DeepResearch different from other deep research APIs?

Most sit on a general-purpose web index. DeepResearch is built on dedicated retrieval infrastructure for each class of knowledge-work data. The result: state-of-the-art accuracy on every public benchmark we tested, at less than half the cost of the next-best system.

What benchmarks does DeepResearch lead?

DeepResearch leads three independent benchmarks. On DRACO long-form research synthesis, it scores 72.7%, ahead of Perplexity Deep Research (70.5%), Claude Opus 4.6 (59.8%), Gemini Deep Research (59.0%), OpenAI Deep Research o3 (52.1%), Parallel Ultra8x (51.2%), and Exa Deep Reasoning (25.9%), at less than half the cost of the next-best system. On ScholarQA-CS, the academic-synthesis benchmark published in Nature, DeepResearch scores 74.5%, a 29% relative improvement over the prior state of the art (OpenScholar). On ScholarQA-Multi: 4.56 / 5.

How much does DeepResearch cost?

Fixed per-task pricing across four modes. Fast: $100 CPM. Standard: $500 CPM. Heavy: $2,500 CPM. Max: $15 per request. $10 in free credits at signup, no card required.

What outputs can DeepResearch generate?

Markdown, PDF, DOCX, XLSX, PPTX, and more. Spreadsheet formulas resolve. Charts render from primary-source data. Decks open cleanly in PowerPoint, Keynote, and Google Slides. Custom JSON schemas are also supported for structured outputs.

Get an API key - $10 in free credits, no card required.